Good morning and happy Monday!

AI is kicking off the week with big moves. Perplexity, the fast-growing AI search startup, wants to buy TikTok and open-source its infamous algorithm. Meanwhile, OpenAI and MIT say only ChatGPT power users get emotionally attached. And over in video land, a new open-source model called Wan2.1 is giving Hollywood vibes, on a home GPU. Here’s what’s going on and why it matters.

📱 Perplexity, AI Startup, Eyes TikTok Takeover Deal

Perplexity, an AI search firm, wants to buy TikTok and rebuild its algorithm as open-source. The company argues this approach avoids monopolies, ensures U.S. oversight, and enhances transparency. Their vision includes a seamless transition and real-time recommendations.

It remains unclear whether the plan covers open-sourcing the TikTok “For You” feed itself or housing user data in U.S. facilities. ByteDance must sell TikTok by April 5 due to U.S. security concerns, and other bidders like Oracle are in play.

If successful, Perplexity’s bid could reshape how social platforms work, prioritizing transparency over secrecy. It positions the U.S.-based firm as a non-monopolistic alternative to tech giants, with potential to set new norms for data privacy and content recommendation.

❤️ Only Super Users Call ChatGPT BFF

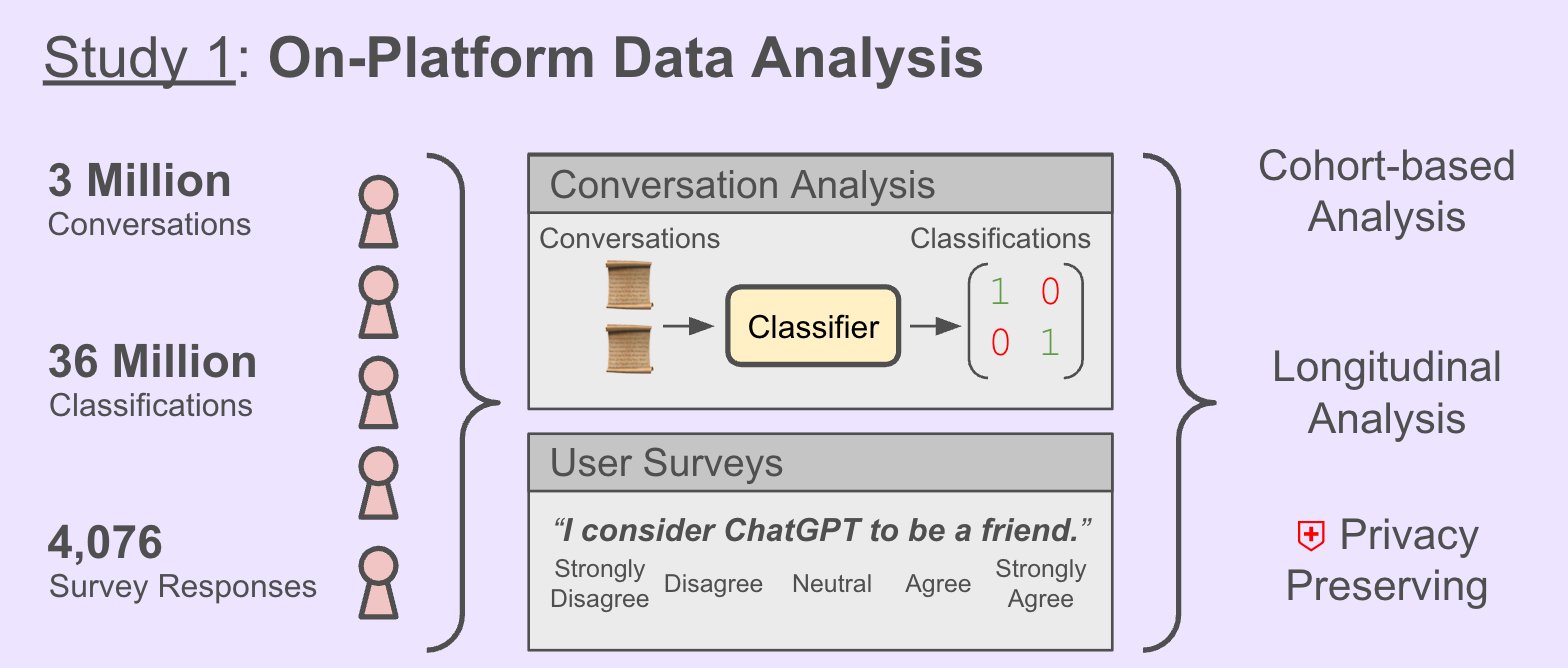

Emotional chats with ChatGPT are rare, says a new study by OpenAI and MIT. Most people use the chatbot for tasks or fun, not deep emotional support. A small group of heavy voice users showed stronger emotional ties, and some even called ChatGPT a “friend.”

One study analyzed 40M chats; the other ran a 4-week trial with 1,000 users. Voice use lifted mood in short bursts but hurt well-being with longer use. Personal chats raised loneliness but lowered dependence. Users’ own views and habits shaped outcomes.

As AI becomes part of daily life, this research helps shape safer tools by showing who’s most at risk from emotional use. By blending real-world data and lab tests, the study offers a clearer view of AI’s social impact, and where to go next.

🎥 A New Open-Source Video Generation Model Beats SOTA

Wan2.1, a new open-source video model suite, sets a new bar in video generation. It beats top commercial and open models, handles tasks like text-to-video and editing, and runs on consumer GPUs. It’s also the first to generate readable Chinese and English text in videos.

The lightest model in the suite, at 1.3B parameters, needs just 8.19 GB of VRAM, runs on consumer GPUs like the RTX 4090, and generates 480p clips in minutes. The suite also includes a powerful Video VAE for encoding high-res, long-form content while keeping motion smooth. It tops out at 14B parameters and reaches state of the art (SOTA) on many benchmarks.

Wan2.1 brings pro-level video AI to the masses. Its speed, quality, and broad task support make it a game changer for creators, developers, and researchers. By staying open and efficient, it pushes video AI closer to everyday use.